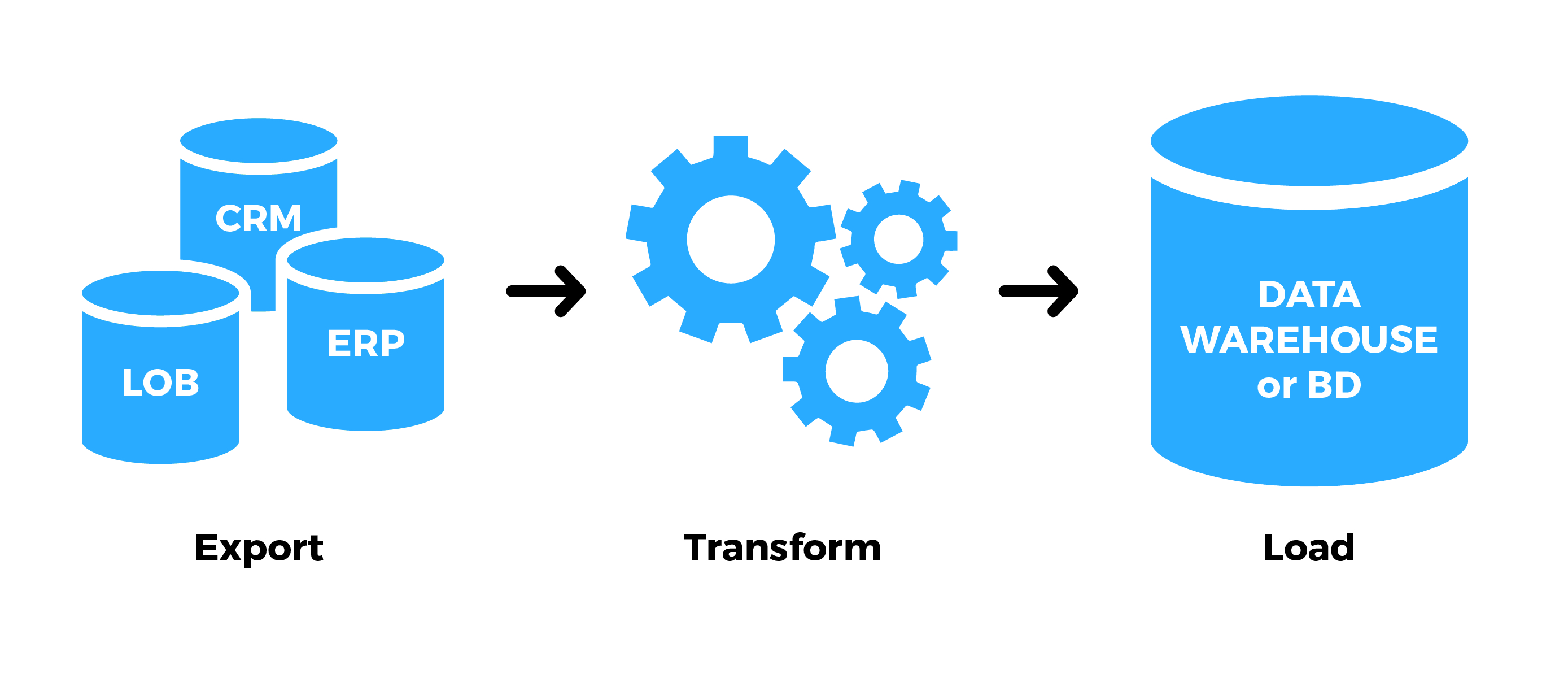

Understanding ETL (extract, transform, load)

Big data and cloud data warehouses are helping modern organizations leverage business intelligence (BI) and analytics for decision-making and new insights. Maintaining a data warehouse requires building a data ingestion process, and that in turn requires an understanding of ETL, its use cases, and its relationship with other components in the data analytics stack.

What is ETL?

ETL (extract, transform, load) is a general process for replicating data from source systems to target systems. It encompasses aspects of obtaining, processing, and transporting information so an enterprise can use it in applications, reporting, or analytics. Let's take a more detailed look at each step.

Extraction involves accessing source systems and reading and copying the data they contain. A data engineer may extract source data to a temporary location, such as a data lake or a staging table in a database, in anticipation of the steps that follow.

The extraction step focuses on collecting data. Many enterprise data sources are transactional systems where the data is stored in relational databases designed for high throughput and frequent write and update operations. These online transaction processing (OLTP) systems are optimized for operational data defined by schemas and divided into tables, rows, and columns. The transactional systems may run on local servers or on SaaS platforms. Other potential sources include flat files such as HTML or log files.

Transformation alters the structure, format, or values of the extracted data through different data transformation operations. Business requirements and the characteristics of the destination system determine which transformations are necessary. Transformations fall into three general categories: validating, cleansing, and preparing data for analysis. Some of the most important transformations are mapping data types from source to target systems, flattening semistructured data intended for a relational database, and data validation.

Most organizations now access and use diverse data sources, from operational, financial, and sales databases to application APIs and scraped web data. Much of this data is unstructured or semistructured and therefore unsuitable for analytics processes in its raw form. The transformation step of the ETL process can ensure that data enters the data warehouse in a required format and structure, allowing data analysts to work with it more easily. For example, an ETL process can extract web data such as JSON records, HTML pages, or XML responses, parse out valuable information or simply flatten these formats, then feed the resulting data into a data warehouse.

The loading stage involves writing data into a target, which may be a data warehouse, a data lake, or an analytics application or platform that accepts direct data feeds. Data can exist in multiple final states and locations within a destination. For example, in a data warehouse, the same records might exist as part of a snapshot table including a week of data and in a larger archival table containing all loaded historical records.

At this stage the data is available for online analytical processing (OLAP) systems, which are optimized for queries rather than transactions. OLAP systems may also serve as data repositories for manual machine learning or predictive analyses.

Why use ETL?

Data warehousing and ETL make up two layers of the data analytics stack. Enterprises use data warehouses to store and access cleansed, consistent, and high-quality business data sourced from diverse origin systems. ETL is the set of methods and tools used to populate and update these warehouses. Once an ETL process has replicated data to the data warehouse, analytics and business intelligence teams (data analysts, data engineers, data scientists) and SQL-savvy business users can all work with it.

ETL's place in the data landscape

ETL is connected to many other important data concepts and practices, including big data, business intelligence, machine learning, metadata, data quality, and self-service analytics.

Big data

Big data describes the availability, diversity, and volume of data that modern enterprises handle. Thanks to faster and more efficient ingestion, organizations can make big data available to the people and systems that transform raw information into business insights.

Business intelligence

BI is an umbrella term that includes infrastructure, tools, and software systems, all of which rely on the data ETL replicates.
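The transformation step described in this article names three core operations: flattening semistructured data for a relational database, mapping data types from source to target, and validation. Here is a minimal Python sketch of those operations on a single JSON-style order record. The record shape, the `TARGET_SCHEMA` columns and converters, and the underscore-joined column names are assumptions made for illustration, not the conventions of any particular ETL tool.

```python
from collections.abc import Callable
from datetime import date
from typing import Any


def flatten(record: dict[str, Any], parent: str = "", sep: str = "_") -> dict[str, Any]:
    """Collapse nested objects into one level, joining key paths with `sep`.

    Lists and scalar values are kept as-is; only nested dicts are expanded.
    """
    flat: dict[str, Any] = {}
    for key, value in record.items():
        name = f"{parent}{sep}{key}" if parent else key
        if isinstance(value, dict):
            flat.update(flatten(value, name, sep))
        else:
            flat[name] = value
    return flat


# Hypothetical target schema: warehouse column -> converter that maps the
# source value (often a string in JSON) to the target column's type.
TARGET_SCHEMA: dict[str, Callable[[Any], Any]] = {
    "order_id": int,
    "customer_name": str,
    "customer_country": str,
    "total": float,
    "ordered_on": date.fromisoformat,
}


def transform_record(record: dict[str, Any]) -> dict[str, Any]:
    """Flatten, validate, and type-map one semistructured record."""
    flat = flatten(record)
    # Validation: reject records that lack a required column.
    missing = [column for column in TARGET_SCHEMA if column not in flat]
    if missing:
        raise ValueError(f"record is missing columns: {missing}")
    # Type mapping: a value that cannot be converted (e.g. int("abc"))
    # raises ValueError here as well. Extra source fields are dropped.
    return {column: convert(flat[column]) for column, convert in TARGET_SCHEMA.items()}


raw = {
    "order_id": "1001",
    "customer": {"name": "Ada", "country": "UK"},
    "total": "42.50",
    "ordered_on": "2024-03-01",
}
row = transform_record(raw)
# row == {"order_id": 1001, "customer_name": "Ada", "customer_country": "UK",
#         "total": 42.5, "ordered_on": datetime.date(2024, 3, 1)}
```

Joining nested keys with an underscore is one common way to flatten objects for a relational table; arrays would usually need a separate child table, which this sketch does not attempt.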

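The loading stage is described as writing the same records into more than one final state, such as a week-long snapshot table and a larger archival table. The sketch below runs extract, transform, and load end to end in Python, using the standard-library `sqlite3` module as a stand-in for both the OLTP source and the warehouse. That substitution, the table and column names (`orders`, `amount_cents`, `orders_archive`, `orders_last_7_days`), and the validation rule are all hypothetical; a real pipeline would connect to whatever source systems and warehouse the organization actually uses.

```python
import sqlite3
from datetime import date, timedelta

# Hypothetical target schema shared by the archive and snapshot tables.
COLUMNS = "order_id INTEGER PRIMARY KEY, total REAL, ordered_on TEXT"


def extract(source: sqlite3.Connection) -> list[tuple]:
    """Read and copy rows from the (hypothetical) transactional orders table."""
    return source.execute("SELECT id, amount_cents, created_at FROM orders").fetchall()


def transform_rows(rows: list[tuple]) -> list[tuple]:
    """Map source types to target types and drop rows that fail validation."""
    return [
        (order_id, amount_cents / 100, created_at)  # cents -> currency units
        for order_id, amount_cents, created_at in rows
        if amount_cents >= 0  # illustrative rule: no negative amounts
    ]


def load(target: sqlite3.Connection, rows: list[tuple], today: date) -> None:
    """Write rows into an archival table and rebuild a 7-day snapshot table."""
    target.execute(f"CREATE TABLE IF NOT EXISTS orders_archive ({COLUMNS})")
    # Upsert so that re-running the load does not duplicate archived records.
    target.executemany("INSERT OR REPLACE INTO orders_archive VALUES (?, ?, ?)", rows)
    # The snapshot holds the same records, limited to the last week.
    target.execute("DROP TABLE IF EXISTS orders_last_7_days")
    target.execute(f"CREATE TABLE orders_last_7_days ({COLUMNS})")
    cutoff = (today - timedelta(days=6)).isoformat()
    target.execute(
        "INSERT INTO orders_last_7_days SELECT * FROM orders_archive WHERE ordered_on >= ?",
        (cutoff,),
    )
    target.commit()


# Usage: an in-memory SQLite database stands in for the OLTP source ...
source = sqlite3.connect(":memory:")
source.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, amount_cents INTEGER, created_at TEXT)")
source.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [(1, 4250, "2024-02-20"), (2, 1999, "2024-02-28"), (3, -500, "2024-02-29"), (4, 10000, "2024-03-01")],
)
# ... and a second one stands in for the warehouse.
warehouse = sqlite3.connect(":memory:")
load(warehouse, transform_rows(extract(source)), today=date(2024, 3, 1))
```

Rebuilding the snapshot from the archive on every run keeps the two tables consistent, and the `INSERT OR REPLACE` upsert means loading the same batch twice leaves the archive unchanged. Storing dates as ISO 8601 text lets the cutoff comparison work as a plain string comparison.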